Background

Over the past couple of months, I worked with an e-commerce startup with a B2B offering to build Customer Success (CS) Dashboards for their clients that they will use to assess this company’s performance. Customer Success Dashboards are perpetual A/B tests in some sense because they present the performance with and without the use of a company's product as a component in their clients' sites. They did have an existing dashboard that they were upgrading from, but it was very different in terms of content and architecture so not much could be reused. In terms of the tech stack, they'd just moved all their data from MySQL to PostGreSQL (Postgres), mainly to improve DB performance so I was engaged to do the following.

- Write queries to extract metrics (daily, weekly, monthly etc.) from transactional tables.

- Create a staging area (tables/views) to store these metrics for easy retrieval from the dashboard, preferably using a single-line-query.

- Write ETL functions and scripts to populate the above tables/views.

- Display the data - at this point, they've built a simple dashboard in C# (their language of choice), because it's just a couple of small tables. In future, though, they're planning higher fidelity dashboards (with charts and stuff) and I might use Google Data Studio for those if I'm engaged to build them mainly because it’s free and the learning curve is not very steep.

- Their customer success team also had formulae for projections so I had to figure out how to calculate these from the above metrics. As is recommended, these calculations were done in the frontend, thereby separating the concerns of compute vs. storage.

The Retrospective

Extracting the metrics

As mentioned above, they'd just moved from MySQL to Postgres, so I had to first convert the existing data extraction queries to be Postgres-compatible. Coincidentally, I'm most comfortable with Postgres (out of all the dialects of SQL), having worked extensively, and most recently, with its Massively Parallel Processing (MPP) avatar, Greenplum (GPDB). In fact, I might scale to GPDB if further data warehousing requirements arise, especially with higher data-volume. Coming back to the task at hand, there are off-the-shelf "converters" that change code from one dialect of SQL to another but they don't typically take care of nuances (e. g., the syntactical differences while handling substrings). The other changes they do offer are fairly straightforward so one doesn't necessarily need these OTS products to perform this transformation. In my case, this preliminary task also acted as a welcome refresher, which was an added benefit.

As it happens, these queries are not just for the dashboard but the metrics are currently updated on Google Sheets from where the CS teams share them with some clients, both periodically and on-demand. So these queries proved useful for some BAU tasks as well.

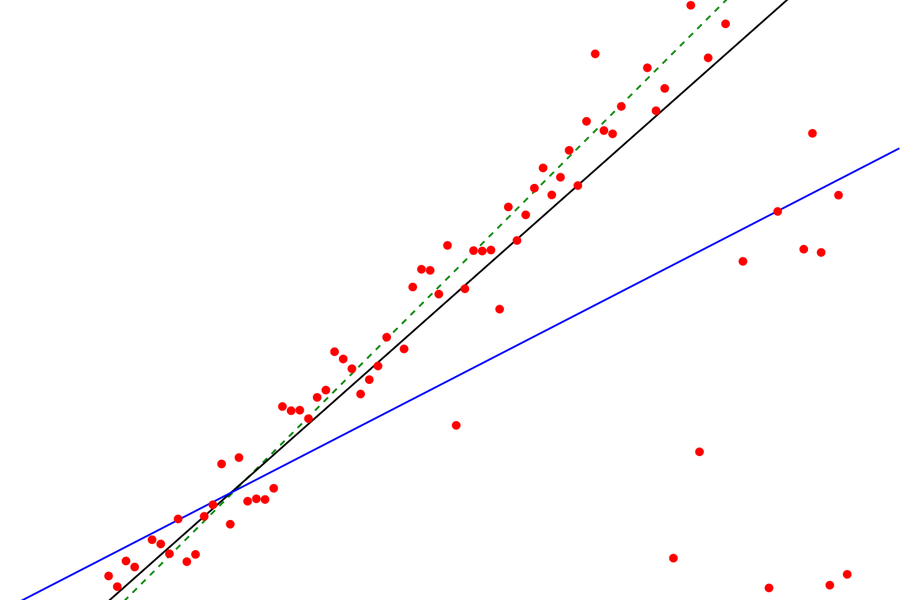

Unique Visitors vs. Unique Visits

The metrics typically calculate visitor counts of various web pages, conversions to sales, and revenue from the conversions, in the A/B format as indicated above. In SQL, the simplest representation of this would be COUNT(DISTINCT (user-id)). This eliminates duplicates over any time period so the daily count cannot be added up to return the weekly or monthly counts because the daily counts added up are the visit counts. Whereas if we run the same query for a few days, that eliminates duplicates and counts the number of visitors. So if the same user comes to your site on 10 different days in a month, your monthly visitor count will be 1 and visit count will be 10.

This difference occurred to me only toward the end of my stint so I’ve told the tech guy whom I worked with and asked him to talk to the business and understand what they want to present. To be sure, I did think of this earlier but was misled by the terminology I used. The second and further visits are actually repeats (which we might want to count) but I referred to them as duplicates (which we, subconsciously, try to eliminate).

So I wrote queries to calculate the counts for various temporal buckets for a given date (using UNION ALL). If we were counting visits, and not visitors, we could just calculate and store the daily figures and aggregate them before display. This could be beneficial in more ways than one: first, the queries for update and retrieval would be simpler. Second, whether we use a dashboard application or any standard web development framework to build the frontend, these date-based aggregations should be reasonably straightforward.

Staging the metrics

I've queried Materialized Views in the past but haven’t created or maintained them. Upon researching further and looking them up in my reference copy of Designing Data-Intensive Applications by Martin Kleppmann, I thought we could use Materialized Views instead of tables to store the metrics. I was planning for them to be refreshed based on insert/update triggers in the parent-tables and thought that we won't need jobs and could plan real-time updates. But upon more research, I found that we can't insert data into a materialized view and can only re-create it using its original query. This meant that we had to use a view or table for this data instead. We decided to go with a table in the end because it’s easy to insert/update into, maintain, and integrate with other downstream systems.

Dynamism within structure: SQL Functions

We needed to run the above queries to extract data from parent-tables that contain data about “events” taking place on each client’s website. We have one event table (with the same schema) for each client, named tb_<client-id>. So the same query needs to be executed against each of these tables for a given date and we must update the client-id and date along with the corresponding figures extracted from here into the metrics table. This calls for dynamism within structure and that’s what Dynamic SQL offers.

The quintessential construct of Dynamic SQL is the function. In this case, we must write a function that accepts client-id and date and runs the above query. In SQL, while defining a function, we must stipulate what it returns. That is, if the function is for insert/update/delete, then it won’t return a data output, so we must say it ‘RETURNS VOID’. In our case, their tech associate wanted control over when and how he’ll execute the insert transactions, orchestrating them with other writes that they perform. My function, therefore, must select the above data, which means we say it ‘RETURNS TABLE’, whose columns are the same as the columns of the select query at its core.

SQL functions allow you to provide the table name as parameter but passing a string that needs to be concatenated with something else to form the table name is not straightforward. There is a way to get table name from the information_schema tables, assign it to a variable, and use the variable in the core-query. This is typically done in a ‘for’ loop where the query for getting the table name is the condition and when it’s met, you run your core-query with the output of the conditional-query as your table name. The loop helps you connect the two queries and pass the table name between them. The information_schema tables are system tables, though, and they won’t know what your table name contains (client-id as tb_<client-id> in our case). Fortunately, though, I had created a table with the client-ids and the corresponding table names just for my reference and that came in handy here. Technically the core-query can be executed any number of times, once for each table returned by the conditional-query but in this case, as it happens, the client-id passed to the function will return only one table so the core-query will run only once with each invocation of the function.

Executing the functions

For each client, there are 30 functions based on the number and type of metric and the device used by the end user. This behoves the need for a script that can be scheduled as a (cron) job that will execute all the functions for each client. So, much like the functions it houses, it needs to accept client-id and date and run all the functions. The easiest way to do this is to run the functions as psql commands within a shell (bash) script.

Moral of the story: Even today's modern data pipelines can be built, and will function reliably, with the oldest data management workhorses : SQL & Unix (shell). :)

More such content...

Data Pipeline for Customer Success Dashboards

When less is more but bigger is better